Every company tells you they take security seriously. Some even take it very seriously. But do they? I started to think about this because of a recent Slack bug. I think there are a lot of interesting things we can look at to decide if a company is taking security seriously or if the company thinks security is just a PR problem. I’m going to call the behavior we want to look at “security signals”.

On August 28, 2020, a remote code execution (RCE) bug was made public from the Slack bug bounty program. This bug really got me thinking about how a company or project signals their security maturity to the world. Unless you’ve been living under a rock, you know Slack has become one of the most popular communication platforms of the modern era, so one would assume Slack has some of the best security around. It turns out the security signals from Slack are pretty bad.

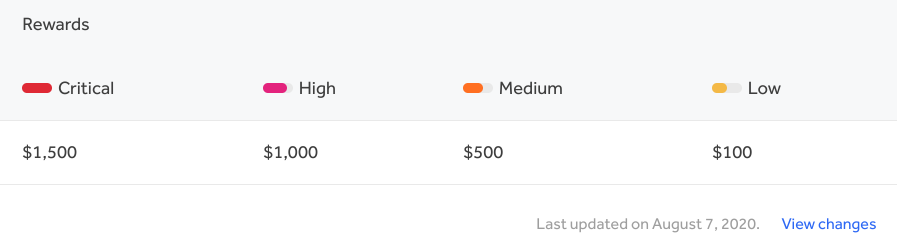

Let’s first focus on the Slack public bug bounty. Having a bug bounty is often a good sign that you consider security important. In the case of Slack, however, it’s a signal against. Their bounties are comically low.

To put this $1,500 critical into context, Mozilla has a critical bounty of $10,000. Twitter has $20,000. I would expect the bounty for something that is critical infrastructure to be much higher. The security signal here is that security isn’t taken very seriously. It’s likely someone managed to convince leadership they need a bug bounty, but didn’t manage to convince anyone to properly staff and fund it. Not having a bug bounty is better than having one that is being starved of resources.

The next thing I look for is a security landing page. In the case of Slack it’s https://slack.com/security. That’s a great address! The page doesn’t explicitly tell us they take security seriously so we’ll have to figure it out on our own. They do list their vast array of compliance certifications, but we all know compliance and security are not the same thing. The page has no instructions on how to report security vulnerabilities. No link to the bug bounty program. There are no security advisories. There are links to whitepapers. It looks like this page is owned by marketing. Once again we have a security signal that tells us the security team is not in charge.

Next I wanted to look at how many CVE IDs have been assigned to Slack products. I could only find one: CVE-2020-11498. If I search slack.com for this CVE, I can’t find it anywhere. Slack has a number of libraries and a desktop client. If they took security seriously, they would have CVE IDs for their client so their customers can track their risk. I tried to find security advisories on the Slack web site and found a page owned by their legal team that does link to the bug bounty program. On the plus side that page does say “We take the security of your data very seriously at Slack.” They’re not just serious, they’re VERY serious!

I could continue looking for signals from Slack, but I think it’s more useful to focus on how this can happen and what can be done about it. There have been plenty of people discussing not letting marketing, or PR, or legal, or some other department be in charge of your security. This is of course easier said than done. A great deal of these problems are the result of the security team not understanding how to properly communicate with the business people. Security is not a tautology; it has to exist as part of the larger strategy.

Signaling Security

The single most important thing you need to learn is never, NEVER try to scare people. Nobody likes to be scared. If you try to pull the “If you don’t do this monsters will eat the children” line, you’ve already lost. Fear does not work. If you have to, write “FEAR DOES NOT WORK” on your wall in huge red letters.

Now that we have that out of the way, let’s talk about what a bug bounty amount tells us. The idea behind a bug bounty isn’t to entice researchers to report issues to you instead of the black market. Anyone willing to sell exploits will always make more selling them illegally than you will pay. If you pay nothing or a terribly small amount you will still get reports for things people accidentally find.

The purpose of the bounty is to entice a security researcher into spending some time on your project. Good bugs take time, and the more time you spend the more good bugs you can find. It’s a bit circular. Think of these people as contract workers for a project you don’t even know about yet. The other thing you have to understand is that if your bounty amounts are too low, it means either you couldn’t get a proper budget for your program, or you have so many critical bugs that a critical has no value. Neither is a good signal.

How do you get enough budget for a proper bug bounty program? You have to frame a bug bounty as something that can enable business. A bug bounty seen as only a cost will always have to battle for funding. A proper bug bounty program signals to the world you take security seriously (you might not even have to tell everyone how seriously you take it). It gives customers a sense of calm that shows you’re more than just some compliance certificates. It helps you gain unexpected contractors to help you find and fix bugs you may never find on your own. If you run the numbers it will be drastically more expensive to let one of your full-time people hunt for bugs, and you still have to pay them if they don’t find anything!

What about having a security contact page? It’s a great tool for marketing to convince security minded customers how seriously you take security! This again comes back to the business value of having a proper security landing page. This answer will be very cultural. If your business values technical users, you use this as a way to show how smart your security people are. If you value transparency, you show off how open and honest your security is. This is one of those places you have to understand what your company considers business value. If you don’t know this, that’s going to be your single biggest problem to solve before worrying about any other security problems. Make your security landing page reflect the business strategy and culture of your company.

What about CVE IDs? Should your company issue CVE IDs for your products? I would reframe this one a little bit. CVE IDs are great, but they’re really the second step in publishing security advisories. Advisories first, CVEs second. Security advisories are a way to communicate important security details to customers. Advisories show customers you have a competent security team on the inside. Good security advisories are hard. If you have zero, why is that? No product has zero security vulnerabilities.

There is an external value to having a team responsible for advisories. If you have a product, your customers are running 3rd party scanners against it. It’s the cool new thing to do. Do you know what those scans look like? Are you staying ahead of the scanners? Are you publishing details for customers about what you’re fixing? Security advisories aren’t the goal; the goal is to have a product that has someone keeping ahead of industry standard security. The industry standard says security advisories and 3rd party scanners are now table stakes.

This post is already pretty long and I don’t want to make it any longer. Every one of these paragraphs could be a series of blog posts on its own. The real takeaway here is that security needs to work with the business. Show how you make the product better and help with the bottom line. If security is seen only as a cost, it will always be a battle to get the funding needed. It’s not up to the business leaders to figure out security can help the bottom line, it’s up to security leadership to show it. If you just expect everyone else to make security a big deal, you’re going to end up with marketing owning your security landing page.

And I want to end on an important concept that is often overlooked. If there is something you do, and you can’t prove it adds to the bottom line, you need to stop doing it. Focus on the work that matters, eschew the work that doesn’t. That’s how you take security seriously, not by putting it on a web site.